Biotechnology used to advance by exhausting one bottleneck at a time. A target would be selected, a screening campaign would be launched, a chemistry program would inch through analog series, and only much later would formulation, delivery, and clinical behavior begin to assert themselves as governing realities. Artificial intelligence has altered that sequence by allowing discovery teams to ask multi-layered questions at the outset rather than at the end. Instead of treating molecular design, developability, and delivery as separate departments of thought, AI increasingly treats them as coupled features of one biological engineering problem. That change matters because disease itself does not unfold in compartments; it unfolds as structure, dynamics, context, and response.

The most consequential shift is not simply speed, though speed is plainly part of the story. It is the movement from catalog-based discovery toward generative discovery, in which models do not merely rank what already exists but propose what ought to exist under a set of biological and physicochemical constraints. That is a deeper epistemic change than the marketing language around AI sometimes admits. In practical terms, it means medicinal chemistry, protein engineering, antibody optimization, and nanomedicine formulation are beginning to share a common computational grammar. In scientific terms, it means representation learning has become a new instrument for probing druggability.

What makes this especially powerful in biotech is that biology rarely rewards single-variable thinking. A molecule is not judged only by affinity, an antibody is not judged only by epitope recognition, and a nanoparticle is not judged only by loading efficiency. Each therapeutic entity must survive a gauntlet of conformational, pharmacologic, immunologic, and manufacturing demands before it can become a product rather than a paper. AI is useful here precisely because it can absorb heterogeneous signals and learn patterns that human intuition alone would struggle to keep in simultaneous view. The result is not the replacement of scientific judgment, but its expansion into design spaces too large for unaided reasoning.

That expansion is now shaping four of the most technically important arenas in therapeutic development: small-molecule design, antibody engineering, protein binder creation, and nanoparticle formulation. These fields once evolved with partially separate tools, separate cultures, and separate assumptions about what counted as a successful candidate. AI is pulling them into closer conceptual alignment by making structure prediction, generative design, and closed-loop optimization available across modalities. Accordingly, the modern drug discovery pipeline looks less like a linear funnel and more like a recursive system in which each design choice can be interrogated against downstream biological consequences. That systems logic begins most visibly with the small molecule, the oldest therapeutic form now being redesigned by the newest computational methods.

Generative Chemistry

Small-molecule discovery has always suffered from an asymmetry between the size of chemical possibility and the size of experimental reality. The number of plausible compounds is vast, but the number that can be meaningfully synthesized, screened, and advanced is severely limited by time, money, and attrition. Classical virtual screening improved the situation by reducing blind experimentation, yet it largely remained a filtering exercise imposed on preexisting libraries. Generative AI changes the posture of the field by allowing models to construct candidate chemotypes under explicit design objectives rather than merely sort them after the fact. In that sense, the center of gravity has moved from retrospective selection to prospective invention.

The technical engines behind that shift are diverse, but they are united by a common ambition: to encode chemistry as a navigable design language. Recurrent architectures taught the field that molecular strings could be generated in a rule-like manner, variational autoencoders introduced continuous latent spaces for property-guided interpolation, transformers brought long-range contextual reasoning into molecular optimization, and diffusion models extended design into three-dimensional, target-aware generation. What used to be a medicinal chemist’s intuition about scaffold modification is increasingly being reframed as conditional sampling over molecular representations. The result is not a magical replacement for synthesis or assay work, but a more disciplined way of deciding what deserves synthesis and assay work. This is where AI begins to function as a theory of prioritization rather than merely a tool of acceleration.

The most interesting scientific consequence is that multi-objective optimization has become native to early design. A compound can now be proposed with target engagement, physicochemical tractability, selectivity logic, and developability features considered together rather than sequentially. That matters because many historical drug discovery failures were not failures of activity alone; they were failures of balance. Molecules often succeeded at one property only to unravel under the next layer of biological scrutiny. AI-driven chemistry tries to reduce that cascade by treating molecular design as a constrained negotiation among competing demands from the outset.
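The idea of balance among competing demands can be made concrete with a small sketch. The example below is illustrative only: the compound names, the three objectives, and every score are invented for demonstration, and Pareto filtering is just one simple way to express multi-objective selection, not the method of any particular platform. A candidate is kept only if no other candidate is at least as good on every objective and strictly better on at least one.

```python
from typing import Dict, List, Sequence

# Hypothetical candidates; all names and scores are made up (higher is better).
CANDIDATES: List[Dict] = [
    {"name": "cmpd_A", "potency": 0.9, "solubility": 0.2, "selectivity": 0.5},
    {"name": "cmpd_B", "potency": 0.7, "solubility": 0.7, "selectivity": 0.6},
    {"name": "cmpd_C", "potency": 0.6, "solubility": 0.6, "selectivity": 0.5},
    {"name": "cmpd_D", "potency": 0.4, "solubility": 0.9, "selectivity": 0.4},
]
OBJECTIVES = ("potency", "solubility", "selectivity")


def dominates(a: Dict, b: Dict, objectives: Sequence[str]) -> bool:
    """True if a is no worse than b everywhere and strictly better somewhere."""
    no_worse = all(a[k] >= b[k] for k in objectives)
    better = any(a[k] > b[k] for k in objectives)
    return no_worse and better


def pareto_front(cands: List[Dict], objectives: Sequence[str]) -> List[Dict]:
    """Keep only candidates that no other candidate dominates."""
    return [
        c for c in cands
        if not any(dominates(other, c, objectives) for other in cands)
    ]


front = pareto_front(CANDIDATES, OBJECTIVES)
front_names = [c["name"] for c in front]
print(front_names)
```

In this toy set, cmpd_C drops out because cmpd_B matches or beats it on all three properties, while the most potent compound survives despite its poor solubility. That is the sense in which a balanced shortlist differs from a ranking by activity alone.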

Even so, the small molecule remains a stern teacher of humility. Models can learn medicinal patterns, but they do not abolish the stubborn realities of synthesis, metabolism, protein flexibility, or human translation. A beautifully scored compound may still be synthetically awkward, mechanistically brittle, or biologically provincial once it encounters the messiness of living systems. The most mature programs therefore use AI not as an oracle, but as the front end of an iterative experimental loop in which design, validation, and redesign continuously inform one another. From there, the logic of computational design extends naturally into biologics, where the challenge is no longer just choosing atoms, but choosing surfaces, loops, and recognition landscapes.

Programmable Antibodies

Antibody engineering has always confronted a paradox of abundance. The immune system can generate an immense range of variable regions, yet therapeutic development must isolate the rare subset that binds the right epitope, adopts the right conformation, avoids problematic liabilities, and behaves well as a manufacturable drug. Traditional discovery methods, from hybridoma workflows to display technologies, are powerful but empirically heavy. AI intervenes by making sequence, structure, and interface prediction part of one design continuum rather than separate analytical exercises. This has turned antibodies from screened biological accidents into increasingly programmable molecular objects.

A major reason this is now possible is the maturation of structural prediction. Models specialized for antibodies have become markedly better at inferring framework geometry, complementarity-determining region behavior, and the difficult conformational logic of the third heavy-chain loop (CDR-H3). That improvement matters because antibody potency is not simply a question of possessing the right residues; it is a question of presenting them in a geometry that a real antigen can engage under real thermodynamic conditions. Structure prediction therefore does more than decorate sequence analysis with visuals. It changes the level at which the field can reason about affinity maturation, epitope access, and developability before experimental campaigns grow expensive.

Language models have added a second layer of capability by learning the statistical grammar of antibody repertoires. Once sequence space is treated as something models can navigate rather than merely archive, antibody optimization becomes less dependent on brute-force library expansion and more dependent on informed mutation proposals. This is a subtle but profound transition. It means the field can begin to ask not only which antibody sequences exist in nature, but which sequences are evolutionarily plausible, structurally coherent, and therapeutically useful under human-imposed constraints. In practice, this opens the door to humanization, affinity maturation, and developability tuning within a computational framework that still respects the biological texture of antibody sequence space.

The deeper significance is that antibody design is moving away from a purely reactive model of discovery. Instead of waiting for immunization, screening, and serendipity to reveal a viable binder, teams can now define an antigenic problem with increasing precision and generate candidate solutions around it. That shift does not eliminate the need for wet-lab rigor; if anything, it makes that rigor more strategically valuable by concentrating experiments around better initial hypotheses. It also creates a conceptual bridge to the broader field of non-antibody binders, where design freedom is even greater and the constraints of classical immunoglobulin architecture no longer dominate the search space. There, AI is not merely refining nature’s repertoire; it is beginning to write new ones.

Designed Binders

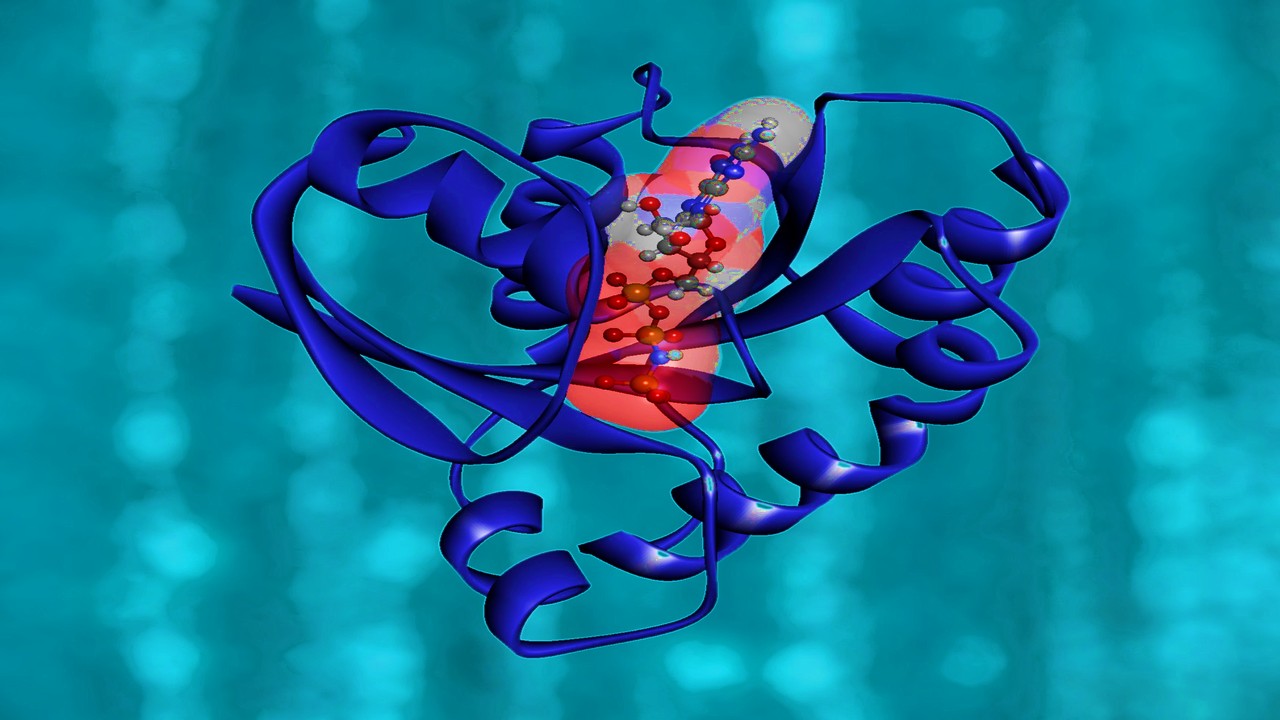

Protein binders occupy a fascinating middle ground between chemistry and biology. They inherit the molecular specificity associated with biologics, yet they are not limited to the canonical architecture of antibodies, and that freedom makes them unusually attractive for difficult targets. Historically, however, de novo binder design was constrained by the sheer difficulty of predicting which backbone shapes, amino acid sequences, and interface geometries would actually fold and bind as intended. AI has transformed this field by letting researchers generate protein structures, optimize sequences, and evaluate foldability in a coordinated workflow. In effect, the discipline has moved from sculpting proteins through partial intuition to drafting them through probabilistic design.

Diffusion-based protein design has been especially important because it treats structure generation as a controlled denoising problem rather than a sequence of handcrafted heuristics. That framing allows binder backbones to be conditioned on target geometry, epitope shape, or motif constraints with striking precision. Sequence design tools then populate those backbones with amino acids likely to stabilize the intended fold and interface, while structure predictors help assess whether the design appears internally credible before it ever reaches a purification column. This three-part logic of generate, sequence, and filter has made binder design far more modular and experimentally tractable. It also clarifies that successful protein engineering is increasingly an exercise in coordinating multiple learned models rather than relying on one master algorithm.
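The modularity of that workflow is easier to see in code. The sketch below is a structural illustration only: each function is a hypothetical placeholder that returns random values where a real pipeline would call a trained model, and the names, length range, and 0.8 threshold are all assumptions chosen for the example. What it shows is the hand-off between three independent stages, not any real tool's API.

```python
import random
from dataclasses import dataclass
from typing import List

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues


@dataclass
class Design:
    backbone_id: int
    length: int
    sequence: str = ""
    confidence: float = 0.0


def generate_backbones(n: int, rng: random.Random) -> List[Design]:
    """Placeholder for a structure generator; here it only picks a length."""
    return [Design(backbone_id=i, length=rng.randint(40, 80)) for i in range(n)]


def design_sequence(design: Design, rng: random.Random) -> Design:
    """Placeholder for a sequence-design model; here residues are random."""
    design.sequence = "".join(rng.choice(AMINO_ACIDS) for _ in range(design.length))
    return design


def predict_confidence(design: Design, rng: random.Random) -> Design:
    """Placeholder for a structure predictor's score in [0, 1]; random here."""
    design.confidence = rng.random()
    return design


def run_pipeline(n: int = 20, threshold: float = 0.8, seed: int = 0) -> List[Design]:
    """Generate, sequence, then filter on the (placeholder) confidence score."""
    rng = random.Random(seed)
    designs = generate_backbones(n, rng)
    designs = [design_sequence(d, rng) for d in designs]
    designs = [predict_confidence(d, rng) for d in designs]
    return [d for d in designs if d.confidence >= threshold]


shortlist = run_pipeline()
print(f"{len(shortlist)} of 20 placeholder designs passed the filter")
```

Because each stage only consumes and returns `Design` objects, any one of them could be swapped for a different model without touching the others, which is the practical meaning of coordinating multiple learned models.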

The scientific excitement here lies in what binders can reach. Many disease-relevant targets resist traditional small molecules because their surfaces are broad, dynamic, or insufficiently pocketed, while some are poorly served by full-length antibodies because of size, delivery, or format constraints. Designed binders offer a way into this territory by permitting compact, highly specific recognition motifs tailored to a particular surface logic. The recent literature has shown that AI-guided binder design is no longer confined to proof-of-principle demonstrations; it is becoming a legitimate route for constructing recognition molecules against viral proteins, difficult epitopes, and previously stubborn biological interfaces. This marks a serious widening of the druggable landscape, not merely a cosmetic expansion of computational ambition.

Yet designed binders also reveal the next frontier of computational therapeutics: dynamic biology. A protein may look persuasive in a predicted complex and still fail under the real-time demands of conformational plasticity, solvent exposure, intracellular context, or manufacturability. The future of this field will therefore depend on connecting static structural excellence to kinetic behavior, cellular persistence, and translational practicality. That same demand for multiscale reasoning is already reshaping how delivery systems are built, because even the most elegant therapeutic design remains incomplete until someone solves the question of where it goes, what it carries, and how it behaves in tissue. That is where AI leaves the level of molecular recognition and enters the engineering of delivery itself.

Intelligent Delivery

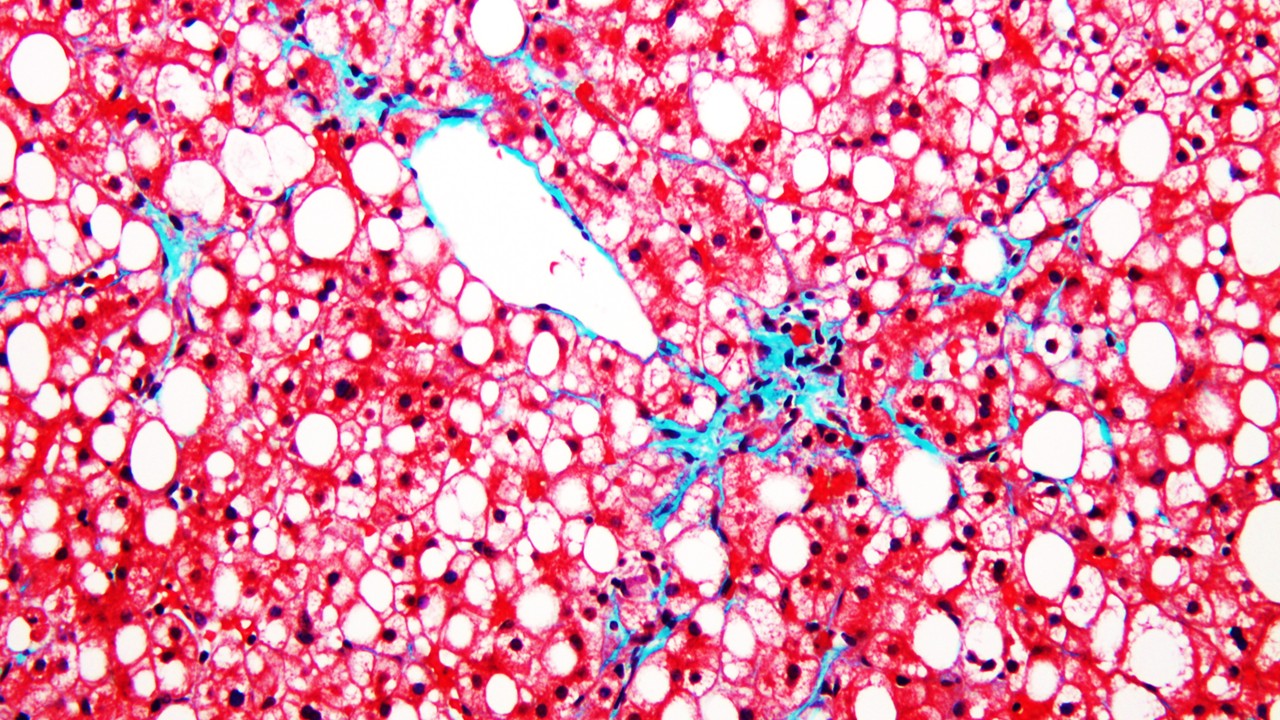

Nanoparticle engineering is often described as a formulation problem, but that description is too modest for what the field has become. In reality, nanoparticle design sits at the intersection of colloid science, interfacial chemistry, pharmacokinetics, immunology, and device-like systems optimization. A delivery particle must negotiate size, surface properties, cargo protection, release behavior, tissue tropism, and biological tolerance, all while remaining manufacturable and reproducible. AI is particularly well suited to this environment because nanoparticle performance emerges from interacting variables rather than from one dominant parameter. The field therefore benefits from models that can reason across coupled design dimensions rather than isolate them one by one.

What has changed is that formulation is no longer just something optimized after a payload is chosen. In the most sophisticated programs, delivery design is becoming part of therapeutic conception itself. Machine learning models can relate particle composition and process conditions to uptake, biodistribution, and functional output, allowing developers to ask much earlier whether a given platform is likely to behave in the desired tissue context. This is especially important for nucleic acid medicines and other modalities whose success depends less on intrinsic target affinity than on the precise choreography of encapsulation, trafficking, release, and endosomal escape. AI thus turns formulation from a late-stage salvage operation into an early-stage design science.

The most compelling direction is the emergence of hybrid discovery systems in which predictive models, automated experimentation, and biological testing operate in a closed loop. In such systems, the model is not merely trained once and deployed repeatedly; it is refined by the consequences of its own design proposals. That architecture matters because delivery platforms are notoriously context dependent. A nanoparticle that behaves elegantly in one assay system may behave indifferently in another, and human translation has often punished the field for overinterpreting early signals. Closed-loop AI offers a way to make formulation science more cumulative, more self-correcting, and less vulnerable to the brittle optimism that has long haunted nanomedicine.
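A closed loop of this kind can be sketched in a few lines. Everything below is a deliberately simplified assumption: the single "ratio" parameter, the simulated assay with its optimum at 0.6, and the local-search proposer all stand in for a real formulation space, a real experiment, and a real learned model. The point is only the shape of the loop, in which each proposal depends on the results of earlier proposals.

```python
import random
from typing import List, Tuple

History = List[Tuple[float, float]]  # (ratio tested, assay readout)


def simulated_assay(ratio: float) -> float:
    """Hypothetical stand-in for an experiment; readout peaks at ratio 0.6."""
    return 1.0 - (ratio - 0.6) ** 2


def propose(history: History, rng: random.Random, step: float = 0.1) -> float:
    """Propose the next ratio near the best result observed so far."""
    if not history:
        return rng.random()  # no data yet: start anywhere in [0, 1)
    best_ratio, _ = max(history, key=lambda h: h[1])
    candidate = best_ratio + rng.uniform(-step, step)
    return min(1.0, max(0.0, candidate))  # keep the ratio within bounds


def closed_loop(rounds: int = 30, seed: int = 0) -> History:
    """Alternate proposing and testing; every result feeds the next proposal."""
    rng = random.Random(seed)
    history: History = []
    for _ in range(rounds):
        ratio = propose(history, rng)
        history.append((ratio, simulated_assay(ratio)))
    return history


history = closed_loop()
best_ratio, best_readout = max(history, key=lambda h: h[1])
print(f"best ratio found: {best_ratio:.3f}")
```

A production system would replace the proposer with a model retrained on the accumulated history and would have to cope with assay noise and context dependence, which this toy version ignores.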

This is also the point at which the larger meaning of AI in biotech comes into focus. The technology is not simply making each modality faster in isolation; it is creating a common engineering language across discovery, design, optimization, and product development. Small molecules, antibodies, binders, and nanoparticles now look less like separate verticals and more like interoperable layers of one computationally assisted therapeutic enterprise. The next decisive advances will likely come from that convergence, where target biology, molecular design, and delivery architecture are optimized as a connected system rather than as a relay race of specialized silos. If the older era of biotech was defined by finding candidates, this one is increasingly defined by composing therapeutic systems.

Study DOI: https://doi.org/10.1002/mco2.70317

Engr. Dex Marco Tiu Guibelondo, B.Sc. Pharm, R.Ph., B.Sc. CompE

Editor-in-Chief, PharmaFEATURES
