The Genesis of RNA-Guided Editing

The journey of CRISPR/Cas9 from bacterial immunity to a programmable gene-editing platform represents one of modern biology’s most profound transformations. Initially identified in Escherichia coli as clustered repeats separated by unique spacers, CRISPR was once considered a genomic curiosity. It wasn’t until the mid-2000s that scientists understood its defensive logic: a molecular memory storing fragments of viral invaders for later recognition. The CRISPR-associated (Cas) proteins, particularly Cas9, act as the executioners of this adaptive immune response, cleaving foreign genetic material upon re-encounter. The architecture of this system, with its leader sequences, repeats, and spacers, set the stage for one of the most flexible gene-editing tools in existence.

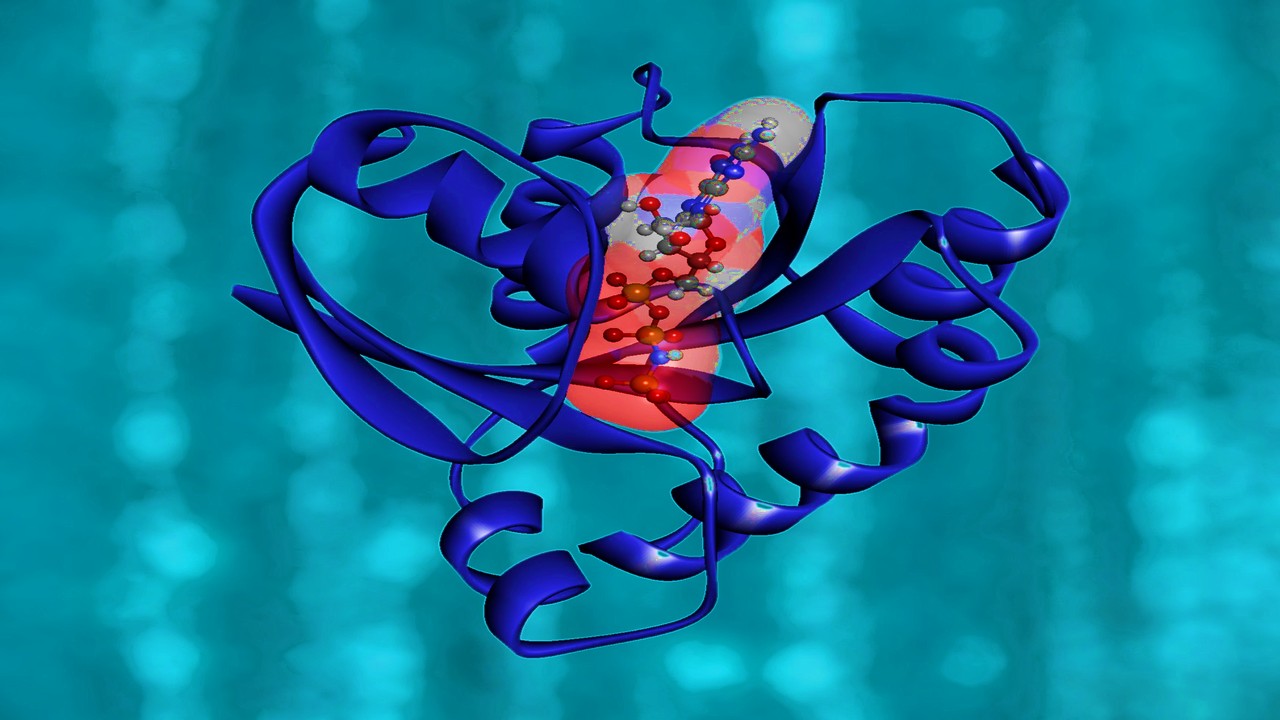

By 2012, CRISPR/Cas9 had been reprogrammed from a natural defense mechanism into a genetic scalpel. Researchers fused the dual RNA system (crRNA and tracrRNA) into a single synthetic guide RNA (gRNA), allowing Cas9 to cut essentially any DNA sequence that is complementary to the guide’s 20-nucleotide targeting region and sits next to a protospacer-adjacent motif (PAM). This compact re-engineering enabled an unprecedented simplicity: directing Cas9 to a gene became as easy as designing a short RNA sequence. However, this simplicity came with a caveat. Cas9 tolerates some mispairing between gRNA and DNA, so sites that only partially match the guide can still be cut, producing off-target edits and prompting an era of intense optimization. Each iteration of gRNA design revealed the delicate balance between flexibility and fidelity in genome editing.
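The targeting rule described above can be sketched in a few lines. This is a minimal illustration, not a design tool: it assumes the NGG PAM of the commonly used Streptococcus pyogenes Cas9 (the article does not name a PAM), scans only the strand it is given, and the function name `find_target_sites` is hypothetical.

```python
import re

def find_target_sites(dna: str, protospacer_len: int = 20) -> list[tuple[int, str, str]]:
    """Return (start, protospacer, pam) for every NGG PAM site on the given strand.

    Assumption: SpCas9-style NGG PAM located immediately 3' of the protospacer.
    The reverse strand is not scanned in this sketch.
    """
    dna = dna.upper()
    # A lookahead is used so that overlapping candidate sites are all reported.
    pattern = re.compile(rf"(?=([ACGT]{{{protospacer_len}}})([ACGT]GG))")
    return [(m.start(), m.group(1), m.group(2)) for m in pattern.finditer(dna)]

# Usage: a toy sequence containing one 20-nt protospacer followed by an AGG PAM.
toy = "GATTACAGATTACAGATTAC" + "AGG" + "TT"
for start, protospacer, pam in find_target_sites(toy):
    print(start, protospacer, pam)
```

A real pipeline would also scan the reverse complement and then score each candidate for efficiency and off-target risk.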

The initial gRNA models were largely empirical, derived from the observation that mismatches near the protospacer-adjacent motif (PAM) drastically reduced cutting efficiency. As experiments accumulated, the molecular choreography of gRNA-DNA hybridization became better understood. Researchers realized that nucleotide composition, folding stability, and even the promoter driving gRNA transcription influenced accuracy. Thus began the computational revolution in CRISPR: the effort to mathematically model and predict the biological nuances of gRNA performance. The discipline merged biology with informatics, shifting gene editing from trial-and-error into algorithmic design.
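The empirical observation that PAM-proximal mismatches matter most can be turned into a toy scoring rule. The sketch below assumes the guide is written 5' to 3' as its DNA-equivalent spacer, with the PAM lying just past the 3' end, and uses a purely illustrative linear weighting; the weights are not taken from any published model.

```python
def mismatch_penalty(guide: str, target: str) -> float:
    """Sum position weights over mismatched positions.

    Hypothetical weighting: position i (0-based, 5' to 3') gets weight (i + 1) / n,
    so mismatches closer to the PAM (3' end) are penalized more heavily.
    """
    if len(guide) != len(target):
        raise ValueError("guide and target must have the same length")
    n = len(guide)
    penalty = 0.0
    for i, (g, t) in enumerate(zip(guide.upper(), target.upper())):
        if g != t:
            penalty += (i + 1) / n
    return penalty

# Usage: one mismatch far from the PAM versus one right next to it.
guide = "A" * 20
distal = "C" + "A" * 19      # mismatch at the 5' end
proximal = "A" * 19 + "C"    # mismatch at the 3' (PAM-proximal) end
print(mismatch_penalty(guide, distal), mismatch_penalty(guide, proximal))
```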

Over time, the refinement of gRNA structures mirrored the maturation of CRISPR as a scientific ecosystem. It became clear that editing efficiency wasn’t a single-variable problem but a multi-dimensional interplay of structural, energetic, and contextual features. The story of CRISPR precision, therefore, is not merely the improvement of a molecular tool but the evolution of computational reasoning embedded in biology’s most programmable code.

From Trial to Algorithm: The Birth of Predictive gRNA Models

The earliest attempts to optimize gRNA were heuristic, guided by biochemical intuition rather than data-driven certainty. Researchers learned that guanine enrichment and secondary structure minimization could improve cleavage efficiency, but quantifying these factors was elusive. Enter machine learning—an emergent ally capable of translating sequence data into predictive frameworks. By encoding nucleotides numerically, algorithms could infer statistical relationships between sequence features and editing outcomes. Each model iteration built on vast repositories of experimental results, transforming biological unpredictability into probabilistic clarity.
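One common way to encode nucleotides numerically is one-hot encoding, in which each base becomes a four-element indicator vector. The following is a minimal sketch; the column order A, C, G, T is an arbitrary choice made here, and U is mapped to T so RNA guides and DNA targets share one alphabet.

```python
NUCLEOTIDES = "ACGT"  # column order is an arbitrary convention for this sketch

def one_hot(seq: str) -> list[list[int]]:
    """Encode a sequence as a list of 4-element indicator rows (A, C, G, T)."""
    rows = []
    for base in seq.upper().replace("U", "T"):
        if base not in NUCLEOTIDES:
            raise ValueError(f"unexpected base: {base!r}")
        rows.append([int(base == n) for n in NUCLEOTIDES])
    return rows

# Usage: a 4-nt RNA fragment becomes a 4 x 4 matrix.
for row in one_hot("ACGU"):
    print(row)
```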

Linear and logistic regression models laid the foundation. Tools like CRISPRScan and Broad GPP Designer used simple correlations between sequence features and observed activity to predict gRNA efficacy. Although primitive by current standards, these models were revolutionary in defining sequence rules—position-specific nucleotide contributions, GC-content thresholds, and PAM-proximity dependencies. The recognition that certain seed sequences were indispensable for target binding established the first generation of computational filters for CRISPR design. Yet, while these algorithms identified productive patterns, they remained limited by human-defined assumptions.
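To make the idea of position-specific contributions and GC content concrete, here is a sketch of how a logistic model turns such features into an activity probability. The coefficients in the usage example are placeholders invented for illustration; they are not the fitted weights of CRISPRScan, the Broad GPP Designer, or any other tool.

```python
import math

def gc_content(seq: str) -> float:
    """Fraction of G and C bases in the sequence."""
    seq = seq.upper()
    return (seq.count("G") + seq.count("C")) / len(seq)

def logistic_score(seq: str,
                   position_weights: dict[tuple[int, str], float],
                   gc_weight: float = 0.0,
                   bias: float = 0.0) -> float:
    """Logistic model over position-specific base indicators plus GC fraction.

    position_weights maps (0-based position, base) to a coefficient; any
    (position, base) pair that is absent contributes zero.
    """
    seq = seq.upper()
    z = bias + gc_weight * gc_content(seq)
    for i, base in enumerate(seq):
        z += position_weights.get((i, base), 0.0)
    return 1.0 / (1.0 + math.exp(-z))

# Usage with hypothetical coefficients: reward a G at the last position.
weights = {(19, "G"): 1.2}
print(logistic_score("A" * 19 + "G", weights, gc_weight=0.5))
print(logistic_score("A" * 20, weights, gc_weight=0.5))
```

In practice the coefficients would be estimated from measured editing outcomes rather than set by hand.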

Machine learning deepened its role as more complex architectures emerged. Tree-based models, including decision trees and random forests, as used in tools such as CRISTA and Elevation, expanded the computational horizon, integrating thermodynamic parameters and mismatch classifications. These models could weigh multiple sequence features simultaneously, generating composite predictions that mirrored biological complexity. Support Vector Machines, adopted in WU-CRISPR and sgRNA Scorer, pushed this frontier further by mapping nonlinear relationships between sequence variations and cutting efficiency. Collectively, these models represented biology’s transition from observation to abstraction: a computational embodiment of natural selection within algorithmic space.

Each refinement reflected a growing awareness that biological systems are neither linear nor random but probabilistically ordered. Off-target effects, once thought to be uncontrollable artifacts, became predictable entities through iterative learning. With larger datasets and better encodings—k-mer embeddings, positional weights, and motif analyses—gRNA design entered an era of predictive precision. The refinement was not only mathematical but philosophical: the transition from designing with biology to designing through computation.
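As a simple illustration of sequence encodings beyond single bases, the sketch below counts overlapping k-mers. Note that this is plain k-mer counting, a simpler relative of the learned k-mer embeddings mentioned above, and the function name is hypothetical.

```python
from collections import Counter

def kmer_counts(seq: str, k: int = 2) -> Counter:
    """Count every overlapping k-mer in the sequence."""
    seq = seq.upper()
    return Counter(seq[i:i + k] for i in range(len(seq) - k + 1))

# Usage: dinucleotide counts for a short fragment.
print(kmer_counts("GGGA", k=2))
```

The resulting counts can be laid out as a fixed-length feature vector (one entry per possible k-mer) and passed to any of the models discussed earlier.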

This intersection of informatics and molecular biology redefined what it meant to “design” life. Predictive models replaced empirical recipes, and CRISPR scientists became data architects, constructing algorithms that evolve as their biological counterparts do. The revolution was no longer just genetic—it was algorithmic.

Neural Networks and the Cognitive Turn in CRISPR Design

As gRNA datasets ballooned in size and complexity, traditional machine learning reached its interpretive limits. Neural networks, inspired by the architecture of cognition itself, offered a framework to capture nonlinearities hidden within biological sequences. Deep neural networks (DNNs) could ingest millions of nucleotide patterns, discover latent motifs, and predict outcomes without explicit feature definitions. With the introduction of DeepCRISPR in 2018, CRISPR design entered its neural era—a shift analogous to the leap from arithmetic to language.

Convolutional Neural Networks (CNNs) revolutionized gRNA evaluation by converting RNA sequences into numerical matrices interpretable as one-dimensional “images.” In this configuration, convolutional filters scanned across the nucleotide landscape, identifying motifs that correlated with activity or instability. CNN-based models, such as CNN_std, transcended the need for manual feature extraction. Their self-learning nature allowed them to uncover structural dependencies and PAM-adjacent nuances that even expert-designed features had overlooked. The ability to integrate noise-tolerant architectures further distinguished DNNs from conventional algorithms, making them ideal for messy biological datasets.
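The scanning behavior of a convolutional filter can be shown without any deep learning library. In this sketch the filter is set by hand to the motif "GG" purely for illustration; in an actual CNN the filter values are learned during training, and many filters run in parallel.

```python
NUCLEOTIDES = "ACGT"

def one_hot(seq: str) -> list[list[int]]:
    """Encode a sequence as rows of A, C, G, T indicators."""
    return [[int(b == n) for n in NUCLEOTIDES] for b in seq.upper().replace("U", "T")]

def conv1d(encoded: list[list[int]], kernel: list[list[float]]) -> list[float]:
    """Slide a (width x 4) kernel along an (L x 4) matrix with no padding.

    Returns L - width + 1 activations, one per window position.
    """
    width = len(kernel)
    activations = []
    for start in range(len(encoded) - width + 1):
        total = 0.0
        for offset in range(width):
            for channel in range(4):
                total += encoded[start + offset][channel] * kernel[offset][channel]
        activations.append(total)
    return activations

# Usage: a hand-set "GG" filter responds most strongly where the motif occurs.
motif_filter = one_hot("GG")
print(conv1d(one_hot("AGGT"), motif_filter))  # [1.0, 2.0, 1.0]
```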

Recurrent Neural Networks (RNNs), particularly their bidirectional variants, added temporal memory to sequence analysis. By treating gRNA as a sequential narrative rather than a static code, RNNs could capture dependencies between distant nucleotides—interactions crucial for secondary structure formation. The DeepHF framework exemplified this advance, combining biological insight with neural memory to optimize for both efficiency and fidelity. It was no longer enough for a gRNA to cut; it had to do so precisely, reproducibly, and predictably across contexts.
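The idea of a bidirectional recurrent pass can be sketched with a single scalar hidden state per direction. Everything numeric here is a hypothetical placeholder (the per-base input weights and the recurrent weight), and the sketch is far smaller than the networks used in tools such as DeepHF; it only shows how each position ends up with one state summarizing its left context and one summarizing its right context.

```python
import math

INPUT_WEIGHT = {"A": 0.5, "C": -0.5, "G": 1.0, "T": -1.0}  # hypothetical values
RECURRENT_WEIGHT = 0.8                                      # hypothetical value

def run_direction(seq: str) -> list[float]:
    """Simple recurrent update: h_t = tanh(w_in[base_t] + w_rec * h_{t-1})."""
    h = 0.0
    states = []
    for base in seq:
        h = math.tanh(INPUT_WEIGHT[base] + RECURRENT_WEIGHT * h)
        states.append(h)
    return states

def bidirectional_states(seq: str) -> list[tuple[float, float]]:
    """Pair each position's forward state with its backward state."""
    seq = seq.upper().replace("U", "T")
    forward = run_direction(seq)
    backward = run_direction(seq[::-1])[::-1]  # run right-to-left, then realign
    return list(zip(forward, backward))

# Usage: each position carries (left-context state, right-context state).
for position, pair in enumerate(bidirectional_states("ACGT")):
    print(position, pair)
```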

Yet, neural models faced a philosophical problem: opacity. Their interpretive mechanisms, buried in millions of parameters, made biological validation difficult. Researchers responded with hybrid architectures like AttCRISPR, which employed attention mechanisms to visualize decision weights—essentially teaching algorithms to explain themselves. This was a cognitive leap for CRISPR computation: networks not only learned how to predict but also why. The union of interpretability and intelligence brought computational biology closer to a transparent form of reasoning, where prediction and explanation coexist.
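At its core, an attention mechanism converts per-position scores into weights that sum to one, and those weights are what gets visualized. The sketch below shows only that softmax-weighting step under simplified assumptions; it is not the AttCRISPR architecture, and the input numbers are invented for illustration.

```python
import math

def softmax(scores: list[float]) -> list[float]:
    """Numerically stable softmax: non-negative weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_pool(values: list[float], scores: list[float]) -> tuple[float, list[float]]:
    """Return the attention-weighted sum of values and the weights themselves."""
    weights = softmax(scores)
    pooled = sum(w * v for w, v in zip(weights, values))
    return pooled, weights

# Usage: the position with the highest score receives the largest weight.
pooled, weights = attention_pool(values=[0.2, 0.9, 0.4], scores=[0.1, 2.0, 0.3])
print(pooled, weights)
```

Inspecting `weights` position by position is the kind of readout that lets a researcher ask which nucleotides a model leaned on for a given prediction.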

As neural networks matured, their predictive power extended beyond efficiency scoring to contextual adaptability. They began accounting for chromatin accessibility, epigenetic modifications, and species-specific promoter interactions. The gRNA became not just a sequence but a contextualized instruction, embedded within the living architecture of the cell. Through neural computation, CRISPR systems evolved from molecular tools into adaptive intelligences of biological design.

Evaluating Precision: Metrics as Biological Truth

The refinement of gRNA design would be meaningless without rigorous evaluation. Metrics like accuracy, Spearman correlation, and the Area Under the Receiver Operating Characteristic (AUROC) curve emerged as the quantitative conscience of CRISPR modeling. Each metric translated the abstract relationship between predicted and observed activity into interpretable biological truth. The challenge was to balance precision and sensitivity—to ensure that models did not merely classify gRNAs correctly but understood their biological behavior under diverse conditions.

Accuracy, while intuitive, often masked subtleties in performance. A model might classify most gRNAs correctly yet fail to distinguish between moderate and high-activity sequences, leading to misleading confidence. Spearman correlation addressed this by ranking predicted versus experimental efficiencies, revealing whether models captured the ordering of biological outcomes rather than their absolute values. High Spearman coefficients indicated that a model understood not just what worked, but how well it worked relative to others—a subtle but critical dimension in predictive biology.
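Because Spearman correlation depends only on ranks, it can be computed as the Pearson correlation of the rank-transformed values. The following standard-library sketch uses average ranks for ties; the example numbers are invented, and it assumes neither input is constant (which would make the denominator zero).

```python
import math

def average_ranks(values: list[float]) -> list[float]:
    """Assign 1-based ranks, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)

def spearman(predicted: list[float], observed: list[float]) -> float:
    """Spearman rank correlation between predictions and measurements."""
    return pearson(average_ranks(predicted), average_ranks(observed))

# Usage: predictions on a different scale still score 1.0 if the ordering matches.
print(spearman([0.1, 0.4, 0.9], [10.0, 20.0, 500.0]))
```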

AUROC and AUPRC (the area under the precision-recall curve) further dissected this performance landscape. Both quantify how well a model’s scores separate positives, such as highly active gRNAs or genuine off-target sites, from negatives across all decision thresholds. In the context of gene therapy, where unintended edits could be catastrophic, these measures became the ethical backbone of CRISPR computation. AUPRC is especially informative on imbalanced datasets, precisely those in which rare yet biologically significant events are vastly outnumbered by negatives, because a high AUROC can coexist with poor precision on the rare class. The evolution of metrics thus mirrored the evolution of CRISPR itself: from blunt efficiency to nuanced accountability.
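Both threshold-free metrics can be computed directly from labels and scores. In this sketch AUROC is calculated as the probability that a randomly chosen positive outscores a randomly chosen negative, and AUPRC is approximated by average precision, a common step-wise estimate. The labels and scores are invented, and the code assumes at least one positive and one negative and, for average precision, no tied scores.

```python
def auroc(labels: list[int], scores: list[float]) -> float:
    """Probability that a random positive scores above a random negative (ties count 0.5)."""
    positives = [s for label, s in zip(labels, scores) if label == 1]
    negatives = [s for label, s in zip(labels, scores) if label == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))

def average_precision(labels: list[int], scores: list[float]) -> float:
    """Mean of the precision values measured at the rank of each positive."""
    ranked = sorted(zip(scores, labels), key=lambda pair: -pair[0])
    hits = 0
    precision_sum = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / hits

# Usage: one negative is ranked above one positive.
labels = [1, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.1]
print(auroc(labels, scores))              # 0.75
print(average_precision(labels, scores))  # (1/1 + 2/3) / 2
```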

What emerged from these evaluations was not merely a competition of algorithms but a framework for scientific transparency. Metrics created a shared language between computational scientists and experimental biologists. They allowed data to converse with biology, anchoring abstract predictions in empirical reality. As models grew more complex, these benchmarks ensured that predictive sophistication did not eclipse biological meaning. A model’s statistical performance, after all, matters only insofar as it reflects what actually happens in the cell.

This integration of evaluative rigor reshaped CRISPR research into an iterative loop of hypothesis, computation, and verification. Each metric-driven refinement brought the models closer to mimicking biological precision, reinforcing the philosophical symmetry between measurement and meaning in the science of design.

Optimization, Hybridization, and the Future of Design Intelligence

By the dawn of the 2020s, gRNA design had evolved into a synthesis of machine intelligence and molecular logic. Hybrid models, such as CNN-XG and CRISPRpred(SEQ), bridged the interpretability of classical machine learning with the depth of neural architectures. These systems could weigh structural features, thermodynamic constraints, and genomic context simultaneously—effectively simulating the decision-making process of an experienced geneticist. The transition from single-algorithm tools to composite architectures marked the arrival of CRISPR computation’s pluralistic phase, where efficiency emerged from collaboration rather than singular design.

This hybridization was more than an engineering solution; it was an epistemological shift. Models were no longer competing but cooperating—each layer compensating for another’s blind spot. Convolutional filters detected motifs; gradient-boosted trees contextualized them; attention modules explained their relevance. Together, they created predictive organisms, capable of self-correction and adaptive inference. Such systems represent the computational parallel of biological evolution, where diversity begets resilience. CRISPR’s algorithmic ecosystem now reflects the genomic adaptability it was built to manipulate.

Looking forward, the challenge is not power but interpretation. The exponential sophistication of hybrid networks risks obscuring their biological meaning. The next frontier lies in developing models that are as transparent as they are powerful—frameworks that can articulate their logic to human scientists while maintaining computational autonomy. The future of gRNA design will depend on this equilibrium: algorithms must remain intelligible collaborators, not inscrutable oracles.

As CRISPR enters its third decade, gRNA design stands as both an artifact of molecular engineering and a testament to the evolution of scientific reasoning. It encapsulates a new paradigm where biology and computation no longer merely intersect—they coevolve. The refinement of guide RNAs mirrors humanity’s own journey toward precision: from random discovery to intentional creation, from experimental manipulation to intelligent design. In decoding life, we have begun to teach machines how to understand it.

Study DOI: https://doi.org/10.3390/biom13121698

Engr. Dex Marco Tiu Guibelondo, B.Sc. Pharm, R.Ph., B.Sc. CpE

Editor-in-Chief, PharmaFEATURES
