The old fantasy in medicinal chemistry was a clean one: a single exquisitely selective ligand would strike a single pathogenic protein, and disease biology would quietly comply. Complex disease never truly behaved that way, and the historical record of drug discovery has made that mismatch impossible to ignore. Tumors reroute signaling; neurodegeneration spills across protein aggregation, inflammation, and synaptic failure; and metabolic disease refuses to stay inside one receptor family or one organ system. Polypharmacology emerged from that friction, not as an admission of defeat, but as a more mature view of biology in which efficacy often depends on reshaping a network rather than silencing a node. AI has now entered that landscape as a design engine, turning multi-target drug discovery from a largely retrospective observation into a forward-looking engineering discipline.

When Selectivity Stops Being Enough

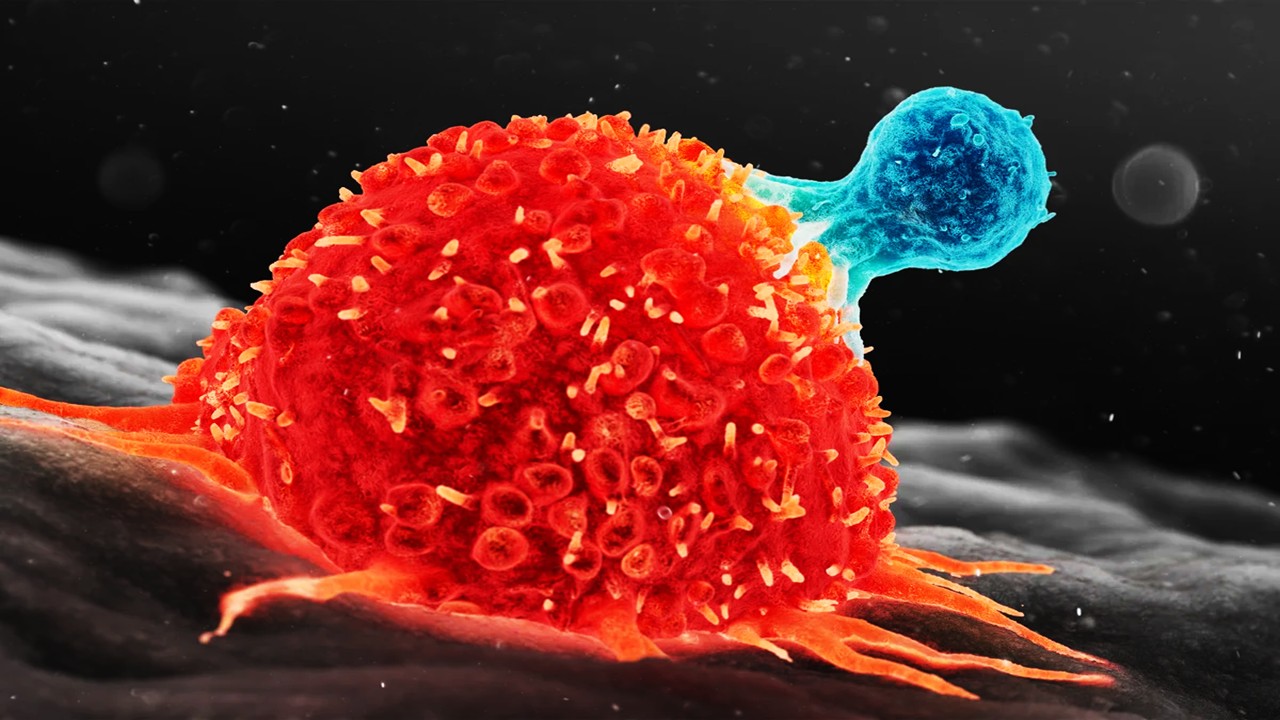

Drug discovery spent decades treating off-target activity as a design failure, yet many of the most effective small molecules turned out to work precisely because they were not as singular as theory had once demanded. In oncology, multi-kinase inhibitors succeeded not by embodying molecular purity but by intercepting convergent and compensatory signaling routes that cancer cells habitually exploit. In neurodegenerative disease, the inadequacy of one-mechanism therapeutics has been even more stark, because amyloid handling, tau biology, oxidative injury, neuroinflammation, and neurotransmitter decline do not progress as independent modules. In metabolic disease, clinical benefit increasingly comes from coordinated engagement of endocrine, inflammatory, and energy-regulating axes rather than from isolated receptor pharmacology. What looks like promiscuity at the level of receptor occupancy can, in the disease context, become precision at the level of systems behavior.

A rational multi-target agent does more than imitate combination therapy in miniature. It forces medicinal chemistry to think in terms of balanced affinity, residence time, pathway coupling, and exposure synchronization across several proteins at once. Because one molecule presents the same exposure to each of its targets, the balance of its activities is set by its affinity profile rather than by the pharmacokinetic drift that often appears when separate drugs are absorbed, distributed, and cleared on different schedules. That matters in diseases where timing is mechanistic rather than merely logistical, because survival signaling and adaptive resistance can emerge in the interval between uneven pharmacologic hits. The central appeal of polypharmacology is therefore not simply convenience, but temporal and mechanistic coherence.
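
The pharmacokinetic drift argument can be made concrete with a deliberately simplified model. The sketch below assumes one-compartment pharmacokinetics with first-order elimination after an IV bolus, equal starting concentrations, and hypothetical half-lives of 4 h and 12 h for two co-administered drugs; real exposure also depends on absorption, distribution, protein binding, and dosing regimen. It tracks the concentration ratio between the two drugs, which a single dual-active molecule holds at 1 by construction because both targets see the same compound.

```python
import math


def concentration(c0: float, half_life_h: float, t_h: float) -> float:
    """Plasma concentration under first-order elimination (one-compartment, IV bolus)."""
    k = math.log(2) / half_life_h  # elimination rate constant, 1/h
    return c0 * math.exp(-k * t_h)


def exposure_ratio(half_life_a: float, half_life_b: float, times_h: list) -> list:
    """Ratio C_A / C_B at each time point, assuming both start at the same concentration."""
    return [concentration(1.0, half_life_a, t) / concentration(1.0, half_life_b, t)
            for t in times_h]


times_h = [0, 4, 8, 12, 24]

# Two separately dosed drugs with different (hypothetical) half-lives.
combo = exposure_ratio(4.0, 12.0, times_h)

# One dual-active molecule: both targets see the same compound, so the
# two "half-lives" are identical and the exposure ratio never moves.
single = exposure_ratio(8.0, 8.0, times_h)

for t, r_combo, r_single in zip(times_h, combo, single):
    print(f"t = {t:>2} h   combination ratio = {r_combo:.3f}   single molecule ratio = {r_single:.3f}")
```

Under these assumptions the combination ratio halves every 6 h, falling to 0.25 at 12 h and to about 0.06 at 24 h, so the relative pressure on the two targets at the end of a dosing interval is very different from the pressure at the start. The single-molecule ratio stays fixed, although how strongly each target is engaged still depends on the molecule's affinity for each one.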

This logic is especially compelling in resistance-prone disease states. A pathogen or tumor can often escape a highly selective agent through mutation, pathway substitution, feedback activation, or microenvironmental buffering, but the problem becomes harder when the pharmacological pressure is distributed across nonredundant vulnerabilities. The point is not to make one molecule bind everything, which would be chemically reckless, but to give it a deliberately shaped target constellation that narrows biologic escape routes. In practice, that often means combining moderate potency across several disease-relevant proteins with rigorous avoidance of anti-targets linked to toxicity. The art of modern polypharmacology is not broadness for its own sake, but selective breadth with mechanistic intent.
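
One way to encode "moderate potency across several disease-relevant proteins with rigorous avoidance of anti-targets" is a desirability score. The sketch below is a hypothetical scoring scheme, not a standard: the pIC50 thresholds, the linear ramps, the target names, and the use of hERG as the example anti-target are illustrative assumptions. A geometric mean over the intended targets rewards balance, because a single inactive target drives the score to zero, while each anti-target contributes a penalty once its activity crosses a threshold.

```python
from math import prod


def ramp(x: float, lo: float, hi: float) -> float:
    """Linear desirability: 0 at or below lo, 1 at or above hi."""
    if x <= lo:
        return 0.0
    if x >= hi:
        return 1.0
    return (x - lo) / (hi - lo)


def profile_score(on_targets: dict, anti_targets: dict,
                  on_lo: float = 5.0, on_hi: float = 7.0,
                  anti_lo: float = 5.0, anti_hi: float = 6.0) -> float:
    """Score a pIC50 profile; all thresholds are illustrative assumptions."""
    # Geometric mean over intended targets: balance matters, and one miss gives 0.
    on = [ramp(p, on_lo, on_hi) for p in on_targets.values()]
    balance = prod(on) ** (1.0 / len(on))
    # Each anti-target multiplies in (1 - desirability of its activity).
    safety = prod(1.0 - ramp(p, anti_lo, anti_hi) for p in anti_targets.values())
    return balance * safety


# Hypothetical pIC50 profiles for three imagined compounds.
profiles = {
    "balanced": ({"target_1": 6.5, "target_2": 6.5}, {"hERG": 4.5}),
    "lopsided": ({"target_1": 8.5, "target_2": 5.0}, {"hERG": 4.5}),
    "potent_but_hERG": ({"target_1": 7.5, "target_2": 7.5}, {"hERG": 5.8}),
}
scores = {name: profile_score(on, anti) for name, (on, anti) in profiles.items()}
for name, s in scores.items():
    print(f"{name:<16} {s:.2f}")
```

The lopsided profile scores zero despite its very potent first target, and the potent profile is heavily discounted by its anti-target activity; the scheme is built to prefer selective breadth over a single dominant activity.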

Once drug discovery accepts disease as a network problem, the next question becomes brutally practical: how does one choose the right nodes to hit? That is where the field leaves philosophical debate and enters computational design. The difficulty is no longer recognizing that multi-target pharmacology can be useful, but determining which combinations are synergistic, tractable, and chemically co-addressable within a single scaffold. This is the juncture at which AI becomes less of a fashionable add-on and more of an operational necessity. The molecule must now be designed not for one lock, but for a family resemblance among several locks embedded in a living system.

Teaching Models to See More Than One Target

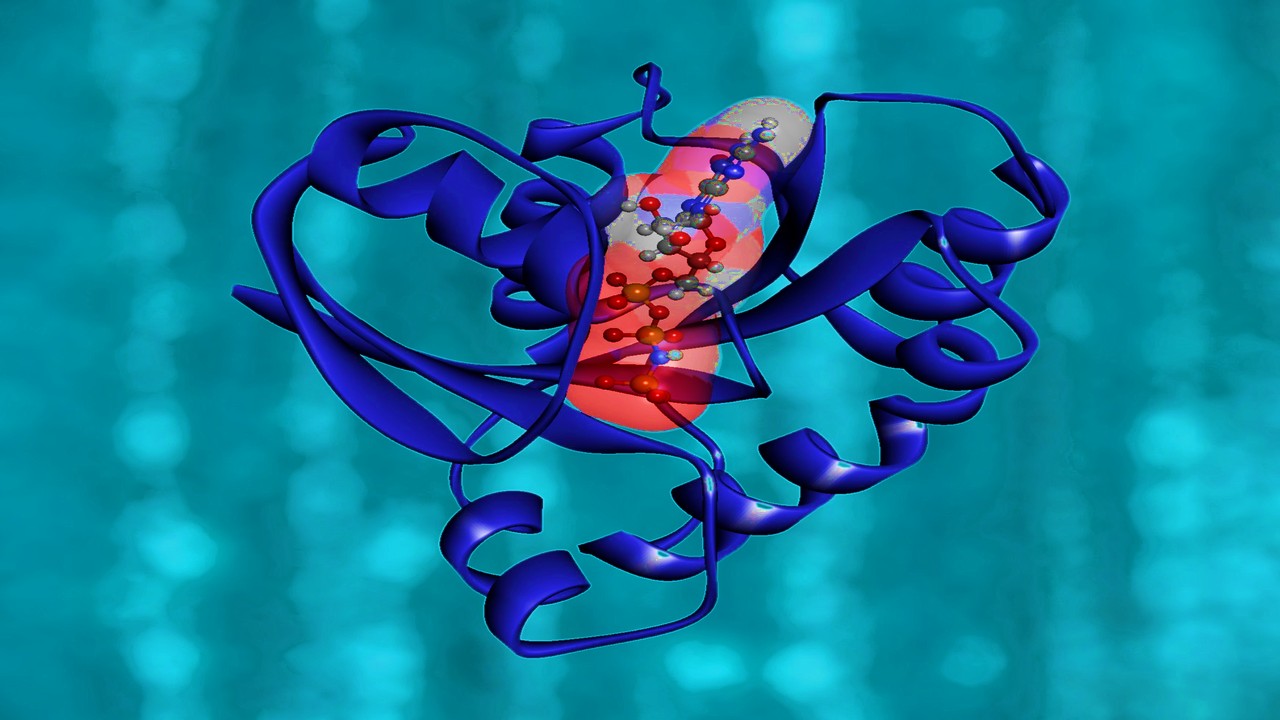

The first computational step in AI-driven polypharmacology is representational rather than generative. Models have to learn that a molecule is not merely a string, a graph, or a fingerprint, but a negotiator between multiple protein environments, each with its own steric constraints, electrostatics, hydration patterns, and tolerance for conformational compromise. Multi-task QSAR frameworks and proteochemometric models moved the field in this direction by allowing activity to be learned jointly across targets rather than in isolated assay silos. That shift matters because cross-target learning can reveal structural features that consistently support productive promiscuity while separating them from motifs that merely create noise. A scaffold ceases to be “active” in the abstract and instead becomes active across a defined target profile.
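
The core mechanic of multi-task QSAR is that one shared representation is trained against every target at once, even though most compound-target pairs were never measured. The sketch below, written with NumPy, illustrates that idea under simplifying assumptions: random synthetic descriptors stand in for real fingerprints, missing measurements are marked NaN and masked out of the loss, and a one-hidden-layer network with a separate linear output head per target is trained by plain gradient descent. It is a toy, not a production QSAR model; a proteochemometric model would additionally encode the protein side.

```python
import numpy as np


def make_toy_data(n_compounds=60, n_features=16, n_targets=3, missing=0.5, seed=0):
    """Synthetic data: targets share latent structure; about half of labels are missing."""
    rng = np.random.default_rng(seed)
    X = rng.normal(size=(n_compounds, n_features))        # stand-in for descriptors
    shared = rng.normal(0, 0.3, (n_features, 2))           # features relevant to all targets
    Y = X @ shared @ rng.normal(size=(2, n_targets))       # correlated target activities
    Y += rng.normal(0, 0.1, Y.shape)                       # assay noise
    Y[rng.random(Y.shape) < missing] = np.nan              # unmeasured pairs
    return X, Y


def train_multitask(X, Y, hidden=8, lr=0.02, epochs=1000, seed=1):
    """Shared tanh layer plus one linear head per target, fit on measured labels only."""
    rng = np.random.default_rng(seed)
    mask = ~np.isnan(Y)
    Y0 = np.nan_to_num(Y)                                  # placeholders, masked out below
    n_obs = mask.sum()
    W1 = rng.normal(0, 0.1, (X.shape[1], hidden))          # shared representation
    W2 = rng.normal(0, 0.1, (hidden, Y.shape[1]))          # per-target output heads
    history = []
    for _ in range(epochs):
        H = np.tanh(X @ W1)
        R = np.where(mask, H @ W2 - Y0, 0.0)               # residuals on measured pairs only
        history.append(float((R ** 2).sum() / n_obs))      # masked mean squared error
        dP = 2.0 * R / n_obs
        dW2 = H.T @ dP
        dW1 = X.T @ ((dP @ W2.T) * (1.0 - H ** 2))         # backpropagate through tanh
        W1 -= lr * dW1
        W2 -= lr * dW2
    return W1, W2, history


X, Y = make_toy_data()
W1, W2, history = train_multitask(X, Y)
print(f"measured labels: {int((~np.isnan(Y)).sum())} of {Y.size}")
print(f"masked MSE: {history[0]:.3f} -> {history[-1]:.3f}")
```

Because every target head reads from the same hidden layer, a measurement on one target also updates the representation used for the others, which is the cross-target learning described above. A real model would add held-out validation, regularization, and a better optimizer.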

Protein structure has deepened that profiling logic. Structure-based workflows, inverse virtual screening, and pocket-description methods now let researchers compare binding environments at scale and ask whether a candidate ligand is likely to recognize a transferable geometry across distinct targets. Similar pockets do not guarantee similar pharmacology, but they offer a physically grounded way to identify where shared ligandability may exist. That is crucial for polypharmacology, because many successful multi-target compounds are not random hybrids so much as molecules exploiting homologous microenvironments, conserved interaction hotspots, or compatible pharmacophoric abstractions. AI benefits when these pocket-level regularities are encoded rather than left implicit.
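
Pocket-comparison methods differ widely in how they describe a binding site, but many share one basic move: encode each pocket as a set or vector of features and score the overlap. The sketch below is a deliberately coarse illustration of that move, assuming each pocket has already been annotated as a set of hypothetical (interaction type, subpocket) features and scoring pairs by Tanimoto (Jaccard) similarity. Real tools use far richer geometric and physicochemical descriptors, and a high score suggests shared ligandability rather than guaranteeing it.

```python
from itertools import combinations


def tanimoto(a: set, b: set) -> float:
    """Tanimoto (Jaccard) similarity between two feature sets."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


# Hypothetical pocket annotations: each feature is (interaction type, coarse subpocket).
pockets = {
    "kinase_A": {("hbond_acceptor", "hinge"), ("hbond_donor", "hinge"),
                 ("hydrophobic", "back"), ("aromatic", "gatekeeper")},
    "kinase_B": {("hbond_acceptor", "hinge"), ("hbond_donor", "hinge"),
                 ("hydrophobic", "back"), ("cation", "solvent")},
    "protease_C": {("hbond_donor", "S1"), ("anion", "S1"), ("hydrophobic", "S2")},
}

# Rank every pocket pair from most to least similar.
ranked = sorted(
    ((tanimoto(pockets[p], pockets[q]), p, q) for p, q in combinations(pockets, 2)),
    reverse=True,
)
for score, p, q in ranked:
    print(f"{p} vs {q}: {score:.2f}")
```

In this toy annotation the two kinase pockets share three of five features (0.60), while neither overlaps the protease pocket, so a dual ligand for the kinase pair would be the more plausible design hypothesis.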

The more ambitious models now try to learn both sides of the pharmacological handshake at once. Multitask systems that predict drug–target affinity while also generating target-aware compounds are pushing toward a unified architecture in which molecular proposal and molecular evaluation are no longer split across separate tools. This matters because a useful polypharmacology engine cannot merely imagine elegant chemistry; it must imagine chemistry already conditioned by plausible bioactivity. When prediction and generation share learned representations, the model begins to internalize structural compromises instead of discovering them only after synthesis. In effect, the design loop becomes less linear and more reflexive.

Generative chemistry has made that reflexivity more tangible. Chemical language models fine-tuned on ligands for paired targets have already produced de novo compounds that, after synthesis, showed activity on one or both intended proteins, with several confirmed as genuine dual modulators. That outcome is scientifically important because it suggests the model is not simply memorizing known chemotypes but learning how to merge pharmacophore logic across target classes. The impressive part is not novelty alone, but the ability to generate compounds that remain chemically coherent while satisfying multiple biological demands. In polypharmacology, novelty without mechanistic fit is decoration; these newer models are beginning to deliver both.

Yet prediction and generation alone do not tell us which target combinations deserve to be designed in the first place. A molecule can be elegantly dual-active and still be biologically trivial if the paired targets do not sit in the right disease circuitry. So the field naturally widens from ligand–protein relationships toward pathway logic, cellular adaptation, and systems-scale vulnerability. At that point, AI must stop behaving like a molecular autocomplete engine and start behaving like a network interpreter. The most consequential polypharmacology is discovered where chemical space and disease topology finally meet.

Designing Through the Disease Network

Network pharmacology changed drug discovery by insisting that disease mechanisms are distributed across interacting pathways rather than concentrated inside solitary proteins. That framework is especially valuable for polypharmacology because it helps distinguish target combinations that are merely additive from those that are mechanistically entangled. A meaningful multi-target drug often works by collapsing compensation, blocking feedback rescue, or exploiting synthetic vulnerability across parallel circuits. The question is not just whether two targets matter, but whether they matter together in a way that changes disease state more profoundly than either one alone. That is the systems-level grammar AI must learn to read.
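
The distinction between "merely additive" and "mechanistically entangled" is usually made quantitative against a reference model of non-interaction. Bliss independence is one common choice; Loewe additivity and highest-single-agent are others, and they can disagree. The sketch below assumes fractional inhibition values between 0 and 1 measured for two single agents and for their combination; the numbers are illustrative, not data.

```python
def bliss_expected(f_a: float, f_b: float) -> float:
    """Expected fractional inhibition if A and B act independently (Bliss)."""
    return f_a + f_b - f_a * f_b


def bliss_excess(f_a: float, f_b: float, f_ab: float) -> float:
    """Observed minus expected inhibition: > 0 suggests synergy, < 0 antagonism."""
    return f_ab - bliss_expected(f_a, f_b)


# Illustrative single-agent inhibitions (fractions, 0 to 1).
f_a, f_b = 0.30, 0.40
print(f"expected under independence: {bliss_expected(f_a, f_b):.2f}")
for label, observed in [("additive", 0.58), ("synergistic", 0.80), ("antagonistic", 0.45)]:
    print(f"{label:<13} observed {observed:.2f}  excess {bliss_excess(f_a, f_b, observed):+.2f}")
```

A positive excess that holds up across doses is the kind of signal that justifies treating two targets as a pair worth co-engaging; a multiplicative null of this kind also underlies many genetic-interaction scores.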

Multi-omics data have made this grammar denser and far more informative. Genomic lesions, transcriptomic shifts, phosphoproteomic rewiring, metabolomic strain, and microenvironmental signaling can now be layered into composite maps that reveal where disease is robust and where it is brittle. In that setting, polypharmacology becomes an attempt to strike the points where cross-talk is consequential rather than merely visible. The practical power of AI lies in integrating these modalities fast enough to prioritize target constellations that a human investigator might otherwise miss or underestimate. Disease modules start to look less like lists and more like dynamic neighborhoods of liability.

Functional genomics supplies the causal test that network maps alone cannot provide. CRISPR-based screening has transformed target discovery by identifying genes and gene pairs whose disruption reveals true therapeutic dependencies, resistance circuits, or latent synergies under drug pressure. Large CRISPR-drug perturbational maps have shown that these screens can recover known sensitizing mechanisms and nominate new combinations with translational potential, turning abstract network hypotheses into experimentally ranked intervention strategies. In resistant cancers, such findings can explain why a monotherapy fails, not because the original target was irrelevant, but because a bypass route was ready and waiting.

A vivid example of this logic comes from hepatocellular carcinoma, where CRISPR-guided work identified EGFR as a synthetic lethal partner in lenvatinib resistance, linking FGFR-axis inhibition to compensatory EGFR pathway activation. That kind of result is more than a combination-therapy anecdote; it is a blueprint for polypharmacology, because it reveals a target pair connected by adaptive network logic rather than by superficial co-expression. It also demonstrates why disease context matters: the same target relationship may be decisive in one signaling architecture and unhelpful in another. AI systems trained only on generic binding data would miss that distinction unless network-state information were built into the design process. The future of multi-target small molecules depends on this merger of causal biology and chemical generation.

By this point, the conceptual shape of AI-driven polypharmacology becomes clear. It is not simply a matter of asking a model to produce compounds with two strong scores instead of one. It is a matter of encoding pathway compensation, tissue context, resistance trajectories, and target co-dependency into the same design logic that governs molecular structure. Once that ambition is stated plainly, another reality follows just as quickly: the field is powerful, but it is not yet fully trustworthy. The promise is substantial precisely because the room for error is also substantial.

The Hard Physics of Translation

AI has accelerated the discovery cycle, but it has not repealed the underlying physics and biology that make drug discovery difficult. Multi-target datasets remain uneven, often biased toward familiar protein families, favored assay formats, and well-traveled chemotypes, which means models can look smarter than they are when evaluated too close to the data that raised them. A polypharmacology predictor trained heavily on kinases may generalize poorly to receptor–enzyme pairs, transcriptional regulators, or targets with highly plastic binding sites. This is not merely a benchmarking nuisance; it shapes which diseases appear computationally tractable and which remain algorithmically underlit. Data abundance can masquerade as mechanistic truth.
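
One practical guard against models that look smarter than they are is to evaluate them away from the data that trained them, for example by holding out an entire target family rather than random compound-target pairs. The sketch below shows a leave-one-family-out split over hypothetical activity records; the family labels and record layout are assumptions for illustration. Scaffold-based splits apply the same idea on the ligand side.

```python
from collections import defaultdict


def leave_one_family_out(records):
    """Yield (family, train, test), holding out each target family once in its entirety."""
    by_family = defaultdict(list)
    for rec in records:
        by_family[rec["family"]].append(rec)
    for family in sorted(by_family):
        train = [rec for rec in records if rec["family"] != family]
        yield family, train, by_family[family]


# Hypothetical, kinase-heavy set of compound-target activity records.
records = (
    [{"target": f"KIN{i}", "family": "kinase"} for i in range(6)]
    + [{"target": "GPCR1", "family": "gpcr"}, {"target": "GPCR2", "family": "gpcr"}]
    + [{"target": "ENZ1", "family": "enzyme"}]
)

for family, train, test in leave_one_family_out(records):
    train_families = sorted({rec["family"] for rec in train})
    print(f"held out {family:<7} train={len(train)} test={len(test)} train families={train_families}")
```

Comparing a model's score on these held-out families with its score on a random split gives a rough measure of how much of its apparent skill comes from familiarity with the families it was trained on.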

There is also the problem of objective conflict. A clinically useful multi-target molecule must simultaneously satisfy potency, target balance, selectivity against anti-targets, membrane permeability, metabolic stability, synthetic accessibility, and a tolerable safety profile, all while remaining chemically elegant enough to optimize. Reinforcement learning and multi-objective generative systems can search that space more efficiently than classical workflows, but search is not the same as understanding. A reward function can tell a model what to optimize without telling it why a compound will survive the brutal filters of formulation, toxicology, and human variability. In this domain, beautiful latent-space geometry still has to answer to liver enzymes, ion channels, and formulation scientists.
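
When objectives conflict, a common alternative to collapsing them into a single weighted reward is to keep the trade-offs explicit with Pareto dominance: a candidate is kept only if no other candidate is at least as good on every objective and strictly better on at least one. The sketch below assumes three hypothetical objectives, already scaled so that higher is better (target balance, predicted safety margin, synthetic accessibility), and illustrative scores for four made-up molecules.

```python
def dominates(a: tuple, b: tuple) -> bool:
    """True if a is >= b on every objective and > b on at least one (all maximized)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(candidates: dict) -> list:
    """Names of candidates that no other candidate dominates."""
    return sorted(
        name for name, objs in candidates.items()
        if not any(dominates(other, objs)
                   for other_name, other in candidates.items() if other_name != name)
    )


# (target balance, safety margin, synthetic accessibility), higher is better; illustrative only.
candidates = {
    "mol_1": (0.9, 0.2, 0.5),
    "mol_2": (0.6, 0.8, 0.6),
    "mol_3": (0.5, 0.7, 0.5),
    "mol_4": (0.7, 0.6, 0.9),
}
front = pareto_front(candidates)
print("Pareto front:", front)
```

Here mol_3 drops out because mol_2 beats it on every axis; the remaining three represent genuinely different compromises, and choosing among them is where medicinal-chemistry judgment, rather than the optimizer, has to decide.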

Interpretability remains another unresolved pressure point. Deep models can identify patterns that medicinal chemists would never articulate in advance, which is part of their value, yet black-box success is uneasy territory when the output is meant for patients rather than competitions. Drug hunters do not only need ranked compounds; they need reasons to believe that a scaffold will bind several intended targets without drifting into liabilities that the training data underdescribed. This is especially true in polypharmacology, where therapeutic and toxic promiscuity can be separated by subtle changes in topology, pKa, lipophilicity, or conformational freedom. A model that cannot explain its balance may still be useful, but it cannot yet be sovereign.

Still, the direction of travel is unmistakable. The most advanced AI workflows are beginning to integrate target prediction, pocket similarity, network prioritization, de novo generation, and experimental feedback into iterative loops that behave less like isolated computational tools and more like adaptive design systems. As these loops absorb richer omics, CRISPR perturbation maps, and better measures of synthesis and safety, polypharmacology will look less like an exotic specialty and more like the default logic for complex disease. The real achievement will not be making small molecules less selective in a crude sense, but making them more intellectually selective about the biological complexity they are built to confront. At its best, AI-driven polypharmacology does not abandon precision medicine; it simply relocates precision from a single protein to the architecture of disease itself.

Study DOI: https://doi.org/10.3390/ijms26146996

Engr. Dex Marco Tiu Guibelondo, B.Sc. Pharm, R.Ph., B.Sc. CompE

Editor-in-Chief, PharmaFEATURES
